Integration: Messaging

We have been exploring ways to share customer information between these two applications:

The solutions we've covered so far are:

Web services are a nice way to decouple the applications, because they allow the applications to define and share a contract rather than taking a dependency on implementation details. But they do introduce other forms of coupling, especially around reliability.

Messaging

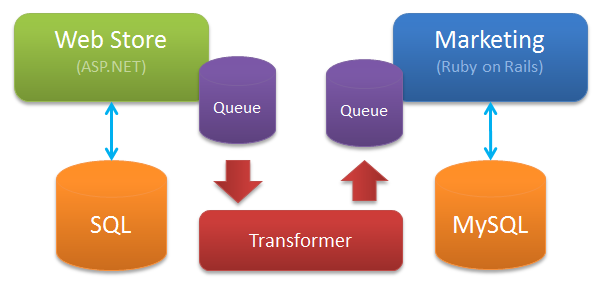

Messaging allows the applications to exchange information asynchronously, using well-defined contracts. The Marketing application would keep its own list of customers, and would accept messages from the Web Store application. Architecturally, it will look like this:

The Web Store team would define the structure of a message - such as CustomerRegistered. They'd probably document not just the structure of the message, but some of the semantics around it (what does "registered" mean?).

The Marketing team would also define a message, such as CreateCustomer, which it would accept, along with the semantics of what "create" means. Note that our tone has changed from describing an event, to describing a command.
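To make the event/command distinction concrete, here is a minimal sketch of the two contracts as plain data types. The field names (`customer_id`, `email`, `registered_at`, `source`) and the `to_wire` helper are assumptions for illustration; the real contracts would be whatever each team documents.

```python
from dataclasses import dataclass, asdict
import json


@dataclass(frozen=True)
class CustomerRegistered:
    """Event owned by the Web Store team: something that already happened."""
    customer_id: str      # hypothetical field
    email: str            # hypothetical field
    registered_at: str    # hypothetical field, ISO 8601 timestamp


@dataclass(frozen=True)
class CreateCustomer:
    """Command owned by the Marketing team: a request to do something."""
    email: str            # hypothetical field
    source: str           # hypothetical field: which system sent the request


def to_wire(message) -> str:
    """Serialize a message with its type name so a receiver can dispatch on it."""
    return json.dumps({"type": type(message).__name__, "body": asdict(message)})


event = CustomerRegistered("c-42", "ada@example.com", "2012-01-01T09:00:00Z")
wire = to_wire(event)
```

Note that neither type refers to the other. Each team can publish and version its own contract independently.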

Integration using messages

The Web Store would behave like this:

- A user clicks the "register" button of a web page

- The customer is saved locally to the Web Store database

- The CustomerRegistered event is written to the queue.

The queue (generally something like MSMQ, ActiveMQ or RabbitMQ) would ideally be local to the machine Web Store is being served from. Web Store can then continue to process other requests. It doesn't care what happened to the event. It doesn't know how other applications intend to use the events. It just writes to the queue, and moves on.
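The Web Store side of this can be sketched in a few lines. This is only an illustration under stated assumptions: an in-memory SQLite database stands in for the Web Store database, Python's `queue.Queue` stands in for the local MSMQ/ActiveMQ/RabbitMQ queue, and the `register_customer` function and table layout are hypothetical.

```python
import json
import queue
import sqlite3

# Stand-ins: in-memory SQLite for the Web Store database, and queue.Queue
# for the local message queue (MSMQ, ActiveMQ, RabbitMQ, ...).
web_store_db = sqlite3.connect(":memory:")
web_store_db.execute("CREATE TABLE customers (email TEXT PRIMARY KEY, name TEXT)")
outgoing_queue = queue.Queue()


def register_customer(email: str, name: str) -> None:
    """Handle the "register" click: save locally, then write the event."""
    with web_store_db:  # commits on success, rolls back on error
        web_store_db.execute(
            "INSERT INTO customers (email, name) VALUES (?, ?)", (email, name)
        )
    event = {"type": "CustomerRegistered", "body": {"email": email, "name": name}}
    # put() returns immediately; no other application is contacted here.
    outgoing_queue.put(json.dumps(event))


register_customer("ada@example.com", "Ada")
```

The function never waits on Marketing, and has no idea Marketing exists. Whether the save and the queue write should share a transaction is a design decision this sketch leaves open.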

Somehow, the message will make its way from a local queue on the Web Store machine to a queue on another server running some kind of transformer application. The transformer will handle the CustomerRegistered message, apply integration logic, transform it to a CreateCustomer command message, and write it to a queue destined for the Marketing application.
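At its simplest, the transformer is a function from one message type to another, plus something to move messages between queues. The sketch below assumes JSON messages with `type` and `body` keys, uses `queue.Queue` as a stand-in for both queues, and invents its "integration logic" (normalizing the email and tagging a `source`) purely for illustration.

```python
import json
import queue

web_store_out = queue.Queue()   # stand-in for the queue fed by Web Store
marketing_in = queue.Queue()    # stand-in for the queue Marketing reads from


def transform(wire_message: str) -> str:
    """Translate a CustomerRegistered event into a CreateCustomer command."""
    message = json.loads(wire_message)
    if message["type"] != "CustomerRegistered":
        raise ValueError(f"unexpected message type: {message['type']}")
    body = message["body"]
    command = {
        "type": "CreateCustomer",
        # Hypothetical integration logic: normalize the email, tag the source.
        "body": {"email": body["email"].strip().lower(), "source": "web-store"},
    }
    return json.dumps(command)


def pump_once() -> None:
    """Move one message from the Web Store queue to the Marketing queue."""
    marketing_in.put(transform(web_store_out.get_nowait()))


web_store_out.put(json.dumps(
    {"type": "CustomerRegistered", "body": {"email": " Ada@Example.com "}}
))
pump_once()
result = json.loads(marketing_in.get_nowait())
```

All knowledge of both contracts lives in `transform`. Neither application needs to change if the other team revises its message, only this layer does.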

From the Marketing team's point of view:

- A CreateCustomer message lands in a local queue. They have no idea how or why, just that it did.

- Code in the Marketing application picks up the message, writes the customer details to the MySQL database

- The message is removed from a queue

Note that steps 2 and 3 are typically done within a transaction; we only delete the CreateCustomer message from the queue when the new customer's details are safely committed to the MySQL database.
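A rough sketch of that "commit first, then delete" ordering follows. It makes several assumptions: SQLite stands in for MySQL, a `deque` stands in for the local queue, and `process_next` is a hypothetical handler. A real setup would more likely rely on the queue's own transactional receive or acknowledgement support than on peeking by hand.

```python
import json
import sqlite3
from collections import deque

# Stand-ins: SQLite in place of MySQL, a deque in place of the local queue.
marketing_db = sqlite3.connect(":memory:")
marketing_db.execute("CREATE TABLE customers (email TEXT PRIMARY KEY)")
incoming = deque()


def process_next() -> bool:
    """Handle one CreateCustomer message; return True if it was consumed."""
    if not incoming:
        return False
    message = json.loads(incoming[0])  # peek: the message stays on the queue
    try:
        with marketing_db:  # commit on success, roll back on any exception
            marketing_db.execute(
                "INSERT INTO customers (email) VALUES (?)",
                (message["body"]["email"],),
            )
    except sqlite3.Error:
        return False  # nothing committed, so the message is left for a retry
    incoming.popleft()  # remove only after the commit succeeded
    return True


command = json.dumps({"type": "CreateCustomer", "body": {"email": "ada@example.com"}})
incoming.append(command)
incoming.append(command)
first = process_next()    # inserts the customer and removes the message
second = process_next()   # duplicate email fails the insert; message stays queued
```

Because the delete happens after the commit, a crash between the two would leave the message on the queue to be processed again. That is one reason handlers in this style are often written to tolerate duplicates.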

The transformer

The box I've labelled "transformer" above is a bit of an iceberg. It could be:

- A $200,000 BizTalk installation

- An NServiceBus DLL with a simple handler using pub/sub

- An open source package like Apache Camel

- A C# console app that uses System.Messaging directly

- An intern who pastes the message into a Word document, prints it, faxes it to a data entry clerk, who then re-types it into an InfoPath form which emits XML compatible with the Marketing queue

Integration of all of your applications may be centralized or decentralized. Once you start adding many transformations, and you make it easy to expose applications to them, it's generally called a service bus.

The code that lives inside the "transformer" box tends to be pretty predictable, if complicated. Enterprise Integration Patterns is a good book (which I have read) about the kinds of things that happen in this layer.

Advantages

Messaging combines the best of our previous solutions:

- Like the ETL solution, neither application is (from a code point of view) aware of the other, and neither requires the other to be online in order to function

- Like the Web Services solution, the application can control how requests are processed, and apply domain logic before data makes its way into the inner sanctum that is the database

Messaging can help to make our applications very reliable, since applications are designed to be completely decoupled from each other. They are decoupled not just from a "contract over implementation" point of view, but from an "uptime" point of view. I'm going to explore these coupling concepts more in another post.

Disadvantages

Developers generally have less experience with messaging for integration, so there will be a learning curve. This is also an area swimming with vendor sharks selling pricey products, so if you spend too much time on the golf course you could get stuck with an integration solution you really don't want.

Conclusion

Hopefully this brief tour of integration solutions gives you an idea of how they could apply in the real world.

If you've used messaging for integration, how did it go? If you thought about it but opted for another solution, why?